I was asked to introduce the basics of linked open data, describe some relevant work in the international museums, libraries and archives sector and include examples of the types of data held by memory organisations. These are my talk notes, though I probably improvised a bit (i.e. rambled) on the day.

From strings to things

Linked Open Data in Libraries, Archives and Museums workshop, Victorian Cultural Network, Melbourne Museum, April 2012

Event tag: #lodlam

Mia Ridge @mia_out

Introduction

Hello, and thank you for having me. It’s nice to be back where my museum career started at the end of the 90s.

I’ll try to keep this brief and relatively un-technical. Today is about what linked open data (LOD) can do for your organisations and your audiences. We’re focusing on practical applications, pragmatic solutions and quick wins rather than detail and three-letter acronyms. If at any point today people drift into jargon (technical or otherwise), please yell out and ask for a quick definition. If you want to find out more, there’s lots of information online and I’ve provided a starter reading list at the end.

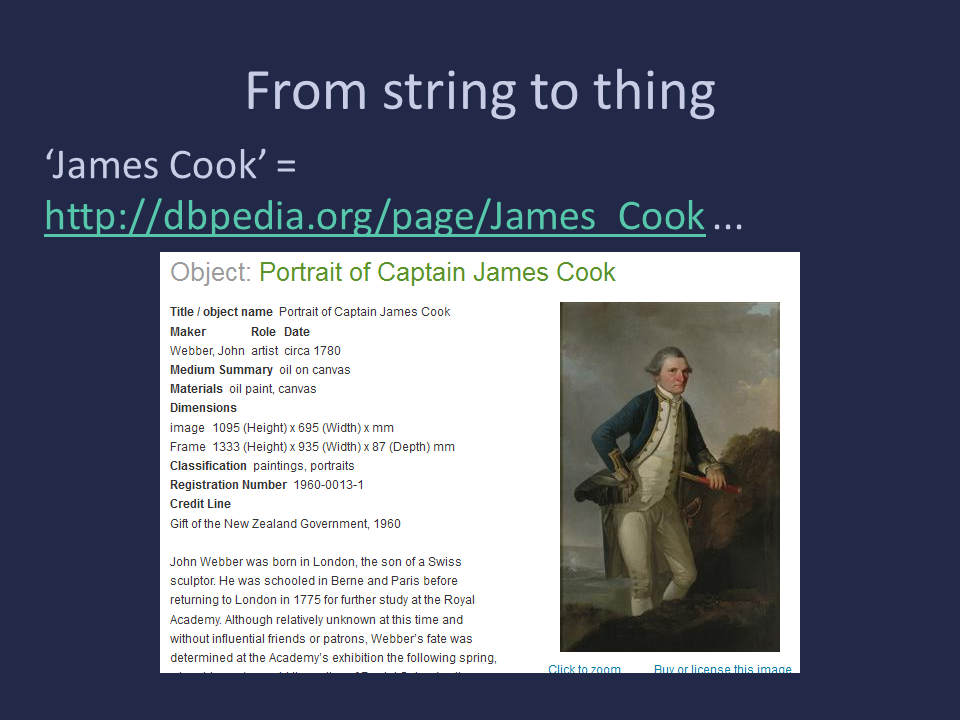

Why do we need LOD? (Or ‘James Cook’ = explorer guy?)

Computers are dumb. Well, they’re not as smart as us, anyway. Computers think in strings (and numbers) where people think in ‘things’. If I say ‘Captain Cook’, we all know I’m talking about a person, and that it’s probably the same person as ‘James Cook’. The name may immediately evoke dates, concepts around voyages and sailing, exploration or exploitation, locations in both England and Australia… but a computer knows none of that context and by default can only search for the string of characters you’ve given it. It also doesn’t have any idea that ‘Captain Cook’ and ‘James Cook’ might be the same person because the words, when treated as a string of characters, are completely different. But by providing a link, like the DBpedia link http://dbpedia.org/page/James_Cook that unambiguously identifies ‘James Cook’, a computer can ‘understand’ any reference to Captain Cook that also uses that link. (DBpedia is a version of Wikipedia that contains data structured so that computers know what kind of ‘thing’ a given page is about.)
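For the more code-minded, here is a minimal sketch of that difference in Python. The two records and their field names (`label`, `subject`) are made up for illustration and don’t come from any real collection system; the only real thing is the DBpedia link quoted above.

```python
# The DBpedia link from the text, used here as an identifier for the person.
DBPEDIA_COOK = "http://dbpedia.org/page/James_Cook"

# Two hypothetical catalogue records that name the same person differently.
record_a = {"label": "Captain Cook", "subject": DBPEDIA_COOK}
record_b = {"label": "James Cook", "subject": DBPEDIA_COOK}


def same_string(a, b):
    """Compare the way a naive search does: character by character."""
    return a["label"] == b["label"]


def same_thing(a, b):
    """Compare the links: two records that share a link are about the same thing."""
    return a["subject"] == b["subject"]


print(same_string(record_a, record_b))  # False: the strings differ
print(same_thing(record_a, record_b))   # True: the link is the same
```

The names never have to agree; the shared link does the work.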

So in short, linked open data is a way of providing information in formats that computers can understand so that they can help us make better connections between concepts and things.

The shiny version for visual people

[Europeana video: http://vimeo.com/36752317]

This video was produced by Europeana to help explain LOD to their content providers and gives a nice visual overview of some of the relevant technical and licensing concepts.

What is Linked Open Data?

In the words of LODLAM’s Jon Voss, “Linked Open Data refers to data or metadata made freely available on the World Wide Web with a standard markup format”[1].

LOD is a combination of technical and licensing/legal requirements.

‘Linked’ refers to ‘a set of best practices for publishing and connecting structured data on the Web’[2]. It’s about publishing your content at a proper permanent web address and linking to pre-existing terms to define your concepts. Publishing using standard technologies makes your data technically interoperable. When you use the same links for concepts as other people, your data starts to be semantically interoperable.

‘Open’ means data that is ‘freely available for reuse in practical formats with no licensing requirements’[3]. More on that in a moment!

If you can’t do open data (full content or ‘digital surrogates’ like photographs or texts) then at least open up the metadata (data about the content). It’s a useful distinction to discuss early with other museum staff as it’s easy to be talking at cross-purposes.

But beyond those definitions, linked open data is about enabling connections and collaboration through interoperability. Interoperable licences, semantic connections and re-usable data all have a part to play.

5 stars

In 2010 Tim Berners-Lee proposed a 5 star system to help people understand what they need to do to get linked data[4].

Some of these are quick wins – you’re probably already doing them, or almost doing them. Things get tricky around the 4th star when you move from having data on the web to being part of the web of data[5].
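As a rough sketch of how the stars build on each other, the snippet below paraphrases the five levels and counts how far a dataset gets before it misses one. The wording of each level is my paraphrase of the scheme, and the key names (`on_web_open_licence` and so on) are invented for this example.

```python
# The five levels, paraphrased. Each star assumes all the previous ones.
FIVE_STARS = [
    ("on_web_open_licence", "on the web (any format) under an open licence"),
    ("structured", "available as machine-readable structured data"),
    ("non_proprietary", "in a non-proprietary format (e.g. CSV rather than Excel)"),
    ("uses_uris", "uses URIs to identify things, so others can point at them"),
    ("links_out", "links to other people's data to provide context"),
]


def star_rating(dataset):
    """Count stars in order, stopping at the first level the dataset misses."""
    stars = 0
    for key, _description in FIVE_STARS:
        if not dataset.get(key, False):
            break
        stars += 1
    return stars


# A hypothetical openly licensed CSV download: data on the web,
# but not yet part of the web of data.
csv_download = {
    "on_web_open_licence": True,
    "structured": True,
    "non_proprietary": True,
}

print(star_rating(csv_download))  # 3
```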

To take an example… rather than just hoping that a search engine will pick up on the string ‘James Cook’ and return the right record to the user typing that in, you can link to a URI that tells a search engine that you’re talking about Captain James Cook the explorer, not James Cook the wedding photographer, the footballer or the teen drama character. Getting to this point means being able to match terms in your databases to terms in other vocabularies, or creating your own links to point to for terms unique to your project, but it means you’ve moved from string to thing.

Now you’ve got that fourth star, you can start to pull links back into your dataset. Because you’ve said you’re talking about a specific type of thing – a person identified by a specific URI – you can avoid accidentally pulling in references to the TV soap character or things held at James Cook University, or about cooks called James.
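Here is a small sketch of that filtering, assuming a handful of invented records. The titles, the `subject` field and the example.org address are all hypothetical; only the DBpedia link comes from earlier in the talk.

```python
COOK_THE_EXPLORER = "http://dbpedia.org/page/James_Cook"

# Hypothetical records. 'subject' holds a link where one has been assigned.
records = [
    {"title": "Portrait of Captain James Cook", "subject": COOK_THE_EXPLORER},
    {"title": "James Cook University prospectus",
     "subject": "http://example.org/id/james-cook-university"},
    {"title": "Recipes by a cook called James", "subject": None},
    {"title": "Chart of the east coast of New Holland", "subject": COOK_THE_EXPLORER},
]


def search_by_string(records, text):
    """Naive search: does the title contain these characters?"""
    return [r["title"] for r in records if text.lower() in r["title"].lower()]


def search_by_thing(records, uri):
    """Linked search: is the record explicitly about this thing?"""
    return [r["title"] for r in records if r["subject"] == uri]


print(search_by_string(records, "James Cook"))
print(search_by_thing(records, COOK_THE_EXPLORER))
```

The string search pulls in the university prospectus and misses the chart, which never mentions Cook by name; the search by link returns the portrait and the chart and nothing else.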

From string to thing

So James Cook has gone from an unknown string to a person embedded in a web of connections through his birth place and date, his associations with ships, places, people, objects and topics… Through linked open data we could bring together objects from museums across the UK, Australia, New Zealand, and present multiple voices about the impact and meaning of his life and actions.

What is LODLAM?

While there had been a lot of work around the world on open data and the semantic web in various overlapping GLAM, academic and media circles in previous years, the 2011 LODLAM Summit was able to bring a lot of those people together for the first time. 100 international attendees met in San Francisco in June 2011. The event was organised by Jon Voss (@jonvoss) with Kris Carpenter Negulescu of the Internet Archive, and sponsored by the Alfred P. Sloan Foundation, National Endowment for the Humanities and the Internet Archive.

Since then, there have been a series of events and meetups around the world, often tying in with existing events like the ‘linking museums[6]’ meetups I used to run in London, a meeting at the National Digital Forum in New Zealand or the recent meetup at the Digital Humanities Australasia conference in Canberra. There’s also an active Twitter hashtag (#lodlam) and a friendly, low-traffic mailing list: http://groups.google.com/group/lod-lam.

4 stars

One result of the summit was a 4 star scheme for 'openness' in GLAM data… I’m not going to go into details, but this scheme was produced by considering the typical requirements of memory institutions against the requirements of linked open data. The original post and comments are worth a read for more information. It links ‘openness’ to ‘usefulness’ and says: “the more stars the more open and easier the metadata is to use in a linked data context[7]”.

Note that even 1 star data must allow commercial use. Again, if you can’t license your content, you might be able to license your metadata, or license a subset of your data (less commercially valuable images, for example).

So, enough background, now a quick look at some examples…

Example projects

The national libraries of Sweden, Hungary, Germany, France, the Library of Congress, and the British Library have committed resources to linked data projects[8]. Through Europeana, the Amsterdam Museum has published “more than 5 million RDF triplets (or "facts") describing over 70,000 cultural heritage objects related to the city of Amsterdam. Links are provided to the Dutch Art and Architecture Thesaurus (AATNed), Getty's Union List of Artists Names (ULAN), Geonames and DBPedia, enriching the Amsterdam dataset”[9].
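If ‘triples’ sounds abstract, a triple is just a three-part statement: a thing, a property, and a value (which may itself be a link to another thing). The sketch below uses plain Python tuples rather than a real RDF library. The object, person and place addresses on example.org are invented; the property names are borrowed from the Dublin Core terms vocabulary purely as an illustration and are not taken from the Amsterdam Museum dataset.

```python
DCT = "http://purl.org/dc/terms/"            # Dublin Core terms namespace
OBJECT = "http://example.org/object/123"     # hypothetical museum object

# Each tuple is one 'fact': (subject, predicate, object).
triples = [
    (OBJECT, DCT + "title", "View of the harbour"),
    (OBJECT, DCT + "creator", "http://example.org/person/42"),
    (OBJECT, DCT + "spatial", "http://example.org/place/amsterdam"),
]


def outbound_links(triples):
    """Return the values that are links to other things rather than plain text."""
    return [o for (_s, _p, o) in triples if o.startswith(("http://", "https://"))]


print(len(triples))             # 3 facts about one object
print(outbound_links(triples))  # the two links a computer could follow
```

Multiply a few facts per object by tens of thousands of objects and you can see how a collection quickly reaches millions of triples.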

BBC

The BBC have been using semantic web/linked data techniques for a number of years[10] and apparently currently devote 20% of their entire digital budget to activities underpinned by semantic web technologies[11].

Online BBC content is traditionally organised by programme (or ‘brand’), but they wanted to help people find other content on the sites related to their interests, whether gardening, cooking or Top Gear presenters. For the BBC Music site, they decided to use the web as their content management system: they had their editors contribute to Wikipedia and the music database Musicbrainz, then pulled that content back onto the BBC site.

The example shown here is the BBC Wildlife Finder, which provides a web identifier for every species, habitat and adaptation the BBC is interested in. Data is aggregated from different sources, “including Wikipedia, the WWF’s Wildfinder, the IUCN’s Red List of Threatened Species, the Zoological Society of London’s EDGE of Existence programme, and the Animal Diversity Web. BBC Wildlife Finder repurposes that data and puts it in a BBC context, linking out to programme clips extracted from the BBC's Natural History Unit archive.”

They’re also using a controlled vocabulary of concepts and entities which are linked to DBpedia, providing a common point of reference across their sites.

British Museum

The British Museum launched a linked data service[12] in 2011.

In a blog post[13] they pointed out that their current interfaces can’t meet the needs of all possible audiences, but their linked data service “allows external IT developers to create their own applications that satisfy particular requirements, and these can be built into other websites and use the Museum’s data in real time – so it never goes out of date. … If more organisations release data using the same open standards then more effort can go into creative and innovative uses for it rather than into laborious data collection and cleaning.”

Civil War 150

Combining different datasets about the American Civil War, this site, Hidden Patterns of the Civil War[14], collects visualisations that allow you to explore maps of slave markets and emancipation, or to compare the language of Civil War era town newspapers on different sides of the issue. This is possible because standalone information and images can be connected and combined in entirely new ways. As one writer said, “Just the ability to search across historical collections is a radical development, as search engines typically aren't able to crawl databases. Part of what linked data does is expose metadata that's been pretty much hidden up until now”[15].

Key issues

There are lots of things to consider before publishing linked open data, but by putting them upfront it’s easier to identify which are real show-stoppers and which are just design issues. Concerns include loss of provenance, context, attribution, income…

GLAM data is messy – it’s incomplete, inconsistent, inaccurate, out of date – sometimes it’s almost non-existent. The backlog of data to be tidied up ‘one day’ is huge, so let’s find ways of sharing data before the data is perfect.

GLAMs should be in this space to help shape tools and datasets to their needs… The LODLAM 4 star system includes attribution because people there knew it was important. Sooner or later someone working on historical place names discovers that Geonames doesn’t work for changes over time, but we’re still working on a solution for that. Your average programmer may not realise that we need to record dates going back several millennia, including precise BC dates, let alone all the issues we face around fuzzy and uncertain dates and date ranges…

Over to you…

Look at suggestions according to whether technical or licensing issues are easier for you to tackle…

Give people something to link to – publish open data, ideally with a non-commercial licence. Linkable data is as important as linked – lots of datasets are being produced, but the power of this will really be felt when they can be connected to other datasets.